Ternary Moral Logic Integration with NVIDIA's AI Ecosystem

A comprehensive technical analysis of integrating ethical governance into AI hardware and the feasibility of triadic processor architectures

Executive Summary

Strategic Imperative

TML integration represents a strategic imperative for NVIDIA to maintain AI market leadership through architecturally-enforced ethical accountability.

Technical Viability

A phased approach from software governance to dedicated coprocessors enables feasible implementation with manageable risk.

Critical Risk

Recent RCE vulnerabilities in Triton and Merlin demonstrate the insufficiency of software-only safety measures.

This report concludes that integrating Ternary Moral Logic (TML) into NVIDIA's AI ecosystem is technically viable and represents a strategic imperative to address the critical limitations of software-only AI safety. By embedding TML, NVIDIA can architecturally enforce ethical accountability, creating a "glass box" AI with immutable, auditable decision-making logs.

The investigation into a future triadic processor architecture concludes that while a full-scale ternary core presents significant long-term engineering challenges, a more immediate and highly feasible path lies in the development of a dedicated governance coprocessor or a triadic execution unit integrated within the existing GPU architecture.

Proposed Architecture Blueprint

Dual-Lane Processing Model

The architecture functions as a parallel governance layer with separate inference and governance lanes to maintain performance while ensuring ethical oversight.

Inference Lane

- • High-speed AI model execution

- • CUDA/TensorRT optimization

- • Minimal latency operations

- • Real-time response capability

Governance Lane

- • Asynchronous ethical processing

- • Sacred Pause evaluation

- • Moral Trace Log generation

- • Blockchain anchoring

Core Component Specifications

Sacred Pause Controller

Lightweight detection of morally complex scenarios with non-blocking signal processing and ethical evaluation triggers.

Always Memory Backbone

Cryptographic sealing with Merkle trees and blockchain integration for immutable, tamper-evident logging.

Hybrid Shield

Dual-layer integrity combining hash-chains with public blockchain anchors for verifiable accountability.

Hardware Focus: The Triadic Processor Question

Conceptual Architecture

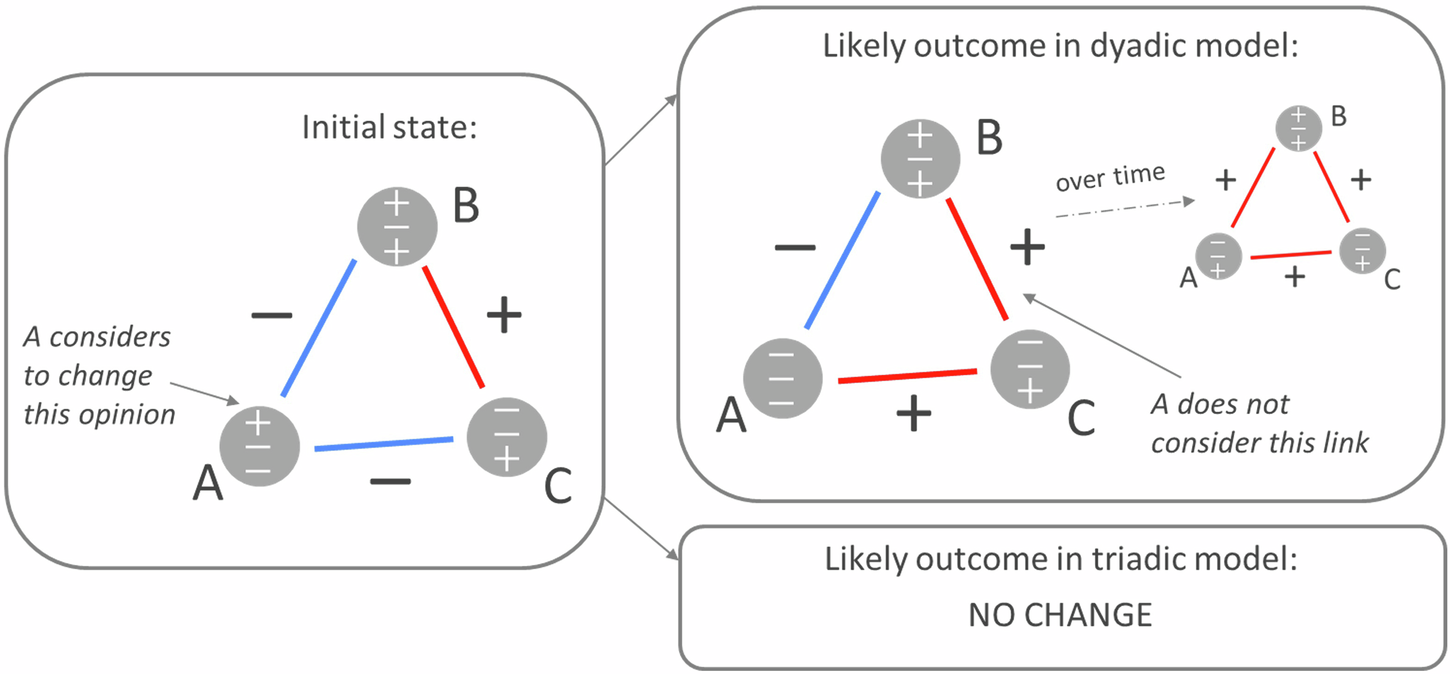

The triadic processor introduces a third logical state beyond traditional binary, creating a hardware-level "Sacred Pause" that transforms software governance checks into physical architectural reality.

Tri-State Logic Implementation

- • Proceed (+1): Positive voltage state

- • Hesitate (0): High-impedance state

- • Refuse (-1): Negative voltage state

Electrical Feasibility

The electrical realization of tri-state logic is well-understood in digital electronics, with high-impedance (Hi-Z) states providing a robust implementation pathway.

Implementation Approaches

- • Multi-level voltage schemes

- • High-impedance state control

- • CMOS transistor reconfiguration

| Option | Description | Pros | Cons | Feasibility |

|---|---|---|---|---|

| Dedicated Governance Coprocessor | Separate specialized chip for ethical governance functions | Least disruptive, clear separation, lower risk | Communication latency, attack surface | High |

| Triadic Execution Unit within GPU SM | Embedded tri-state logic in Streaming Multiprocessors | High security, low latency, fine-grained control | Immense engineering challenges, ecosystem overhaul | Medium |

| Full Ternary Core Architecture | Complete redesign based on ternary logic | Profound benefits, inherently ethical design | Massive complexity, complete ecosystem disruption | Low |

Recommended Implementation Path

Software Layer Integration

Integrate TML as software runtime governance in CUDA and TensorRT (6-12 months)

Hardware-Assisted Governance

Develop dedicated TML Coprocessor for cryptographic and ethical reasoning (18-24 months)

Full Triadic Core Integration

Explore complete triadic architecture with hardware-enforced ethics (3-5 years)

Why Binary + Software Safety is Insufficient

Critical Vulnerabilities

Recent discoveries of Remote Code Execution (RCE) flaws in NVIDIA's Triton Inference Server and Merlin framework demonstrate the brittleness of software-only safety measures.

CVE-2025-33213 & CVE-2025-33214: Critical vulnerabilities allowing arbitrary code execution and complete bypass of safety mechanisms. [36]

Opacity & Bypassability

Software governance layers are inherently vulnerable to bypassing, disabling, or exploitation through configuration errors or malicious tampering.

"ShadowMQ" vulnerability: Insecure code patterns propagated across multiple AI frameworks including TensorRT-LLM, creating systemic risk. [35]

Core Limitations of Software-Only Governance

Technical Deficiencies

- • Opacity: Black box decision-making processes

- • Bypassability: Vulnerable to configuration changes

- • Mutability: Logs can be altered or deleted

- • Non-determinism: Inconsistent enforcement

Operational Impact

- • Regulatory Risk: Non-compliance with EU AI Act

- • Liability Exposure: Unclear responsibility chains

- • Trust Deficit: Public and enterprise skepticism

- • Market Limitations: Exclusion from critical sectors

TML Integration into NVIDIA's Current Stack

| TML Pillar | Integration Platform(s) | Key Function |

|---|---|---|

| Sacred Pause | CUDA, TensorRT | Hardware/software checkpoint for ethical evaluation before inference operations |

| Always Memory | CUDA, TensorRT | Immutable, cryptographically sealed logging of all AI actions and evaluations |

| Moral Trace Logs | Omniverse, Clara | Detailed, human-readable record of AI's reasoning process for high-value applications |

| Human Rights Pillar | DRIVE, Robotics | Hard-coded constraints prioritizing human safety in physical-world AI systems |

| Earth Protection Pillar | DRIVE, Robotics | Constraints and optimization for environmental sustainability in AI operations |

CUDA/TensorRT Integration

The "Sacred Pause" would be implemented as CUDA extensions or kernel launch qualifiers, while TensorRT's graph-based execution provides natural insertion points for moral evaluation.

Integration Points

- • CUDA kernel launch hooks

- • TensorRT execution context

- • Layer optimization passes

- • Memory management callbacks

Platform-Specific Integration

Specialized platforms require tailored TML implementations for domain-specific ethical considerations and safety requirements.

Platform Requirements

- • DRIVE: Real-time safety constraints

- • Clara: Patient privacy preservation

- • Omniverse: Digital asset integrity

- • Robotics: Physical world safety

Performance, Privacy, Storage & Bottlenecks

Performance Targets

Privacy Protection

GDPR Compliance

Pseudonymization and data minimization

Trade Secret Protection

Epistemic Key Rotation (EKR)

Storage Solutions

Merkle Trees

Efficient batching and verification

Asynchronous Anchoring

Decoupled blockchain integration

Latency Optimization Strategies

CUDA Stream Parallelization

Leverage separate CUDA streams for inference and governance tasks, allowing parallel execution without sequential bottlenecks. [598]

- • Dedicated streams for TML operations

- • Overlapped computation and logging

- • Optimized memory access patterns

TensorRT Optimization

Integrate TML operations into TensorRT's optimization passes for minimal performance impact. [626]

- • Fused kernel implementations

- • Mixed-precision computations

- • Layer optimization integration